6/24/2009

Stones versus Guns

Sounds really, really bad. There's no way to say that without sounding understated. I've also seen pictures of axe wounds. Many sources claim the Basiji were wielding axes.

Fascist fucking thugs. As long as groups attempt to wield power, there will always be these little-man shits. I would call them animals, but it would be a horrible thing to compare an animal, simply living out its nature, to these despicable wastes, tickling their feedback circuits by carving their violent, psychotic world-views into other people's flesh.

I was listening to an unrelated interview with a biographer of Stanley Milgram today. Milgram is the scientist who did the "shock" experiments: the subject is told he is to administer "electric shocks" to an actor, on the authority of a researcher. Although the actor pretends s/he is in incredible pain, two-thirds of the time the subject continued to give the "shocks", even though s/he was free to get up and leave at any time. The biographer said, when asked about the relevance to WWII and the Holocaust, that the experiment doesn't really explain Nazi behavior. It might explain the behavior of the train operators taking Jews to the camps, but the experiment cannot say anything about the creativity of torture--the soldiers who would compete with each other to torture more brutally, and the doctors who devised psychotic medical experiments.

I thought the same thing around the time of Abu Ghraib. I'm certain those soldiers were told that their torture was okay, but no one told them specifically what to do, nor to take photos of it and put them on the Internet. Creativity--or what rudiments of it these people could find within their rotted minds.

There are certain people out there who not only find themselves in the employ of fascist or fascistic leaders, doing their violent dirty work, but who are also, perhaps not unrelatedly, the sorts of psychotic power-thugs that get off on it. Is it enough to shoot people? No. They pick up axes, aim at children, and throw people off of bridges. There is no word awful enough.

As I said before, I'm always with the people. Guns against stones is what we so often see, and I think there is a reason for this. Any violent piece of shit can go get a gun and shoot people. But it is only the people that pick up stones to throw. It is not a pretty or romantic image--it is often the last act before getting gunned down. But it represents the fact of the matter--a finger on a trigger, versus the overwhelming anger of people en masse. I would throw a stone, if I could.

But this is nothing new. What is new is that, in some bizarre spin on the Evidence/Confession obsession of post-Christian law, combined with a cyber-timed, techno-fetish Bataillesque reincarnation, we can see these horrors playing out "in real-time", as it were. Our connection with the Internet provides a strange, more-real-than-real sense of the events. Of course, if we were there it would be unspeakably real. But because we are not, and can read the words of panic, and see the wailing relatives on grainy, uploaded reality footage, and know that it is not a snuff film presented as "death in the lens", there is an uncanny realness that goes beyond theorizations of the simulacra. It is not uncanny in the sense that it is unreal; it is uncanny in the sense that it takes a medium of representation (YouTube, a normally all-too-surreal site) and forces it to be real in our minds. It is not just a depressing news reel. Looking at the video of Neda's death, I felt actual fear for myself being shot in the street. It is as if after nights and nights of hearing the neighbors get into a physical fight, one night the wall is transparent, and you can actually see them beating each other. Anybody with any sense knows that such violent crimes happen everywhere, all around the world, on a daily basis. But this is one happening NOW, and RIGHT IN FRONT OF US.

But of course it isn't actually happening live--Tehran is some twelve hours ahead of my location in time, and so I see the video after it has been uploaded, during my daytime, while it may be 2AM in Tehran. It is part of the cyber-time extension--in which other places and times become co-extensive with my reality because I can access them so easily, in such an "at will" format.

The problem is, there is little I can actually do. I can't go into the streets (protesting in front of an embassy is clearly not the same thing), and although one can do a bit to further the message, it is up to the people of Iran now.

I wonder what they will do. The pundits compare it to the '79 revolution--they take the shouts of "Allah Akbar" as the secret password: it is something to do with a rising spirit of Islam. Maybe an Islam different from other Islams the pundits have identified previously, but they are the Other, still.

I think it must be different. The Iranians are the ones holding the stones, but they know, regardless of what they might think about it, that we are behind them. When have the Iranians ever had the world behind them? Even if the people are defeated, or co-opted, or dissuaded, or killed, people do not forget things like this. Being assaulted by the state is not something you forget. The people are conscious of themselves, both in victory, and in defeat. When the Basiji shot Neda, they killed only one person, but they shot at millions of people. I've seen comparisons (by Americans, mind you) between Iran and 9/11. The people's wounds are the people's wounds--and like I said, the people do not easily forget.

Twitter and its ilk may not be the best reporting tools in the world. This may not be journalism at all. The networks are plagued by overzealous retweeters, misinformation, provocateurs, and just plain old spam. But you know what is exactly like that? A street protest. Nobody knows what is going on, but when that black line of cops starts advancing, you feel the electricity in the air. Nobody knows what's happening, but as they said once, everyone knows which way the wind is blowing.

So is this the cyber-time consciousness of the people? Are we linked in, not to information or social networks, but to the mass itself? Only history will truly say, and by that time, it's really unimportant. But it is something to think about. There are right and wrong ways of doing many things. But think about the next protest, with a Twitter network dump channel set up in advance, showing anyone with an Internet connection what it looks like to get spit on by a cop, or get sworn at by some crazy anti-immigration protester. It's a far cry from a coherent organization strategy, but it is a sort of consciousness. And consciousness might be the thing missing from activist movements for some time now.

But regardless of what it might be in the future, I know what it is now. This is consciousness; this is a way to not forget.

6/21/2009

Unstoppable

I've seen this in several places and, pre-judging the video, didn't watch it. I finally did. All the way to the end, please.

It made me smile.

Now, this is something I would change the color of my Twitter avatar for.

6/20/2009

Computerized Counterpoint

I believe I have Tweeted several times, while in unconditional throes of synthesized ecstasy, my overwhelming enthusiasm for David Borden.

"Synthesized" refers to synthesizers, by the way, and not the org-chem creation of ecstasy, as in the drug. My ecstasy resulted from the electrical pulse modulation noise.

And "synthesizers" refers to the archaic instrument of the future, not to an SF-induced category of as-yet-nonexistent drugs, which enable your body to meld across time-space by implanting tachyons directly into your time-cortex, via nanomachine pump. After proper mutation only, of course.

I just want to make sure we're all on the same page here.

David Borden is one of a school of musicians playing experimental compositions from the 70s into the 80s and beyond, working with large amounts of choreographed repetition, and such techniques as counterpoint. By counterpoint, I mean the not-exactly-new technique (Bach is its most famous master, though it long predates him) of playing different sequences of notes that would not be harmonic on their own, but that, when synchronized together, still form a musical effect.

By experimental, I mean his records are really hard to find, and are often collected by people into other experimental artists, like John Cage, Steve Reich, Philip Glass, and so on.

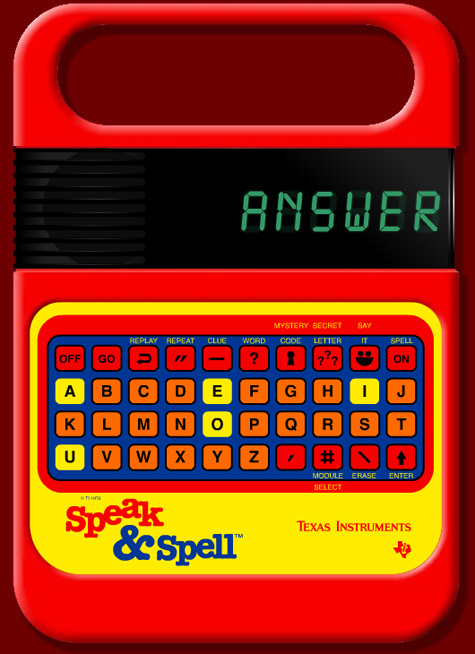

But the synthesizer! Oh the synthesizer!

It is difficult to pinpoint exactly what it is about the synth that gives Borden's music such a rare dynamism. The synth has such a varied history. It ushered in the era of "electronic" music, sounds created not from any physical vibration of strings or soundboxes, but from vibrations in the circuit--transistor-induced oscillations, resistor-sculpted sine-waves riding the harsh green light of early monitors, modulated intensities of pulse energy, pulled from their light-speed stream to flow through paper-covered magnets, condensing the background hum of the radiative universe into sounds our antiquated bodies were able to perceive.

But synth has also represented the height of cheese in music, taking the rebellion of the Moog and fitting it to the reactionary keyboard sound of pre-programmed melodies, for pre-programmed people. It became the cheap copy of music, showing up in the back of TV shows, elevators, and cheap lounges, where the digital revolution hadn't yet reached to free music from its expensive arbiters, and the cheap reproduction of brass, drums, and strings would do well enough, though it was slowly driving us mad through its insidious appropriation of the quiet corners in life--the silence of a waiting room, the pauses while on hold, and the places within buildings where radio waves could not penetrate and the Internet/mobile universe had yet to explore. Porn films, muzak, and discount discos. Synth became synonymous with cheap noise, a lack of quality and a papering-over akin to bulk-purchase paint.

But listening to David Borden, this collection of faded wax begins to break down. I can feel the excitement of the future once again. The sound of the wall being broken down by new technology. The wonder and the mystery, the fear and the danger, of sounds coming from a mere jumble of wires.

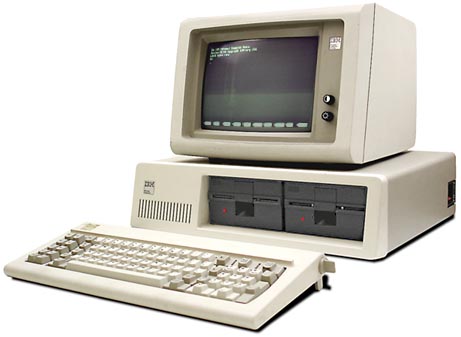

Listening to "The Continuing Story of Counter-Point", I feel this enthusiastic fear of the future. Between the counterpoint notes lies the wonder of the computer age as it was originally felt--in the mystery of the molded plastic box sitting on the desktop, the strangely mechanical and yet knuckle-popping eroticism of a 5 1/4" diskette being inserted into its drive, and in the shadowy mystique of green graphics splayed across the black mirror of a CRT screen. In those interior circuit boards, entire metropolises might very well exist, their uncanny future of dystopic speed a vision of our own, understood in fantasies of digital ghosts and disembodied electronic doppelgangers, dreams of viruses not even microns in length because their existence was in the ideal reality of information, and of sentience so new as needing to be taught to speak. A potential deus ex machina to the human race, simultaneously the prime mover of the next; these are the haunting specters not of old crimes, but of potentially apocalyptic dreams. The only thing worse than the memory of past misdeeds is the oracular-telephony--Oedipus' new technological prototype--the uncanny digital-prolapse in knowledge of the unavoidable fate of the future's cataclysmic desires.

SF felt this wonder mightily, and imbued its creations with its aesthetic of future trauma in the language of the present tense. In David Borden's oscillating score I hear the insinuated anxiety of flying above Blade Runner's Los Angeles, the diving rhythm more than compensating for the lack of visual flames, and the steady drone of Vangelis' own synth compositions radiating from the environment. In this world the advertisements are the only visible feature in the sky, and the synth warms us to the concept of new diseases, the synthetic frailty of life we are currently in the process of inventing.

I can feel it also mirroring the excitement and tremor of coming events, like in the opening subway ride of The Warriors. Barry De Vorzon's synth in this sequence is the teenage push, the libidinal id overly prevalent in these futures, because regardless of whether we are aging rapidly or finding eternal life, the world is constantly new to us, an uncomfortable ocean of emotional triggers we will never learn how to respond to properly, because the hormonal imbalances brought on in the desiring-milieu of the future can never resolve themselves in a millennial cosmos forever on the first day of its unfolding. The guitar riffs may be the same, but they'll be heard looking out of the front of a high-speed subway car, looking down into the darkness of the tunnel ahead. In this future, everything will always be violently different.

Even in the misunderstandings of the technology we find the same aesthetic. Take Tron, Disney's paradigm of anthropomorphism. The humanity of computing components aside, this world is painted in glowing luminosity on its edges, but its planes are composed of the dark void. Power is rampant, though it takes the storybook guise of the evil sorcerer of old. In this current-future we live above entertainment arcades, working on designing what plays out below, even entering this circuit system ourselves. But whether or not the ligature of the circuit actually is the highway it resembles, we are still in the system--and isn't this the source of our anxiety, and the basis of our charge of thaumaturgy? Computers are the demons we so welcomingly invite into our homes. Journey may grab the title tunes in the soundtrack, but Wendy Carlos' synth score is the mainstream nightmare, Disney-fied.

The paranoia finds its climax in Terminator. Nothing more than a B-grade stalker/shooter on its face, it forces us into the depths of speculative fiction to think about the cyber-time'd cyborg sex roles between human and machine. Anxious, murderous death represses the orgasmic little death in this film, as red-eyed, mindless pursuit replaces our truly dangerous desires of fusion with machines. The dance club is called "Tech-Noir", and the fashion-stagnant crowd dances to the synth beat in a despicable love of newness and consumer technology, while the machinery underneath the flesh stalks its female victim, naturally attempting to forestall the future fecundity of her womb. This might be the caricature, and the tag-line bringing them into the theater. But when you watch the chase scenes, seeing bullets traded between these species from the windows of the now archaically huge Detroit dinosaurs, flying under one present's Los Angeles circuit board of highway structures, molded in history's indestructibly cheap concrete that will one day form merely the rubble, like a beach of our smashed skulls, the synth takes on the aspect of pure fear, pumping from the speakers like adrenaline into our blood stream, emoting the danger and darkness of 80s LA with a thermonuclear blast punctuated by a laser's harsh glare.

This is a synth-aesthetic that is now dead to us. When we view these representations of the future, they seem retroactively passe, trapped within a time-period of hairstyles, pop music, cars, and obsolete technology.

But maybe this technological-consciousness is not obsolete, but simply changed. Our technology now serves a different role, prompting us to explore nowness, rather than the future. Our technology is developed and designed to blend in, and to form a seamless element of our consumer environments. The aesthetic replaces function in many instances, rather than stemming from the function, or its future-potential function. We no longer look at disk drives as a remarkable fusion of the mechanical with the ideal. Now we design our flash drives to look like other things. We don't hunch in front of workstations, cramping our bodies around the square physicalities of glass-enclosed electron guns, relying upon our imagination to translate text into space. Everything is ergonomic, designed with clean lines and recessed flat screens, fitting in the pocket as easily as a neoprene bladder, becoming flat and light, meant to blend in with its surroundings rather than be the component center of attention. The electromagnetic consciousness has been reduced to nothing. Now we expect our machines to survive drops to the floor, and being dunked in a latte. There were days when we purged our workspaces of all electromagnetic interference, from too-large electric motors, to tiny refrigerator magnets and toys. We were conscious of fields, and their effect upon the data. Magnetic data extended its importance into a presence, a scientific element of the environment, and storage material was treated with a necessary respect. Now this is outsourced to server farms, almost never touched by human hands--to the "cloud", the ancient coupling of existence without weight, bespeaking the Olympian firmament rather than the world we inhabit. It isn't humans that have been isolated, enslaved, and reduced to their usefulness like slaves. We have enslaved the computer, and destroyed its humanity.

We have removed its life-blood, we have reduced its sense of speed. They grow faster all the time, but now in invisible ways, beyond the scope of our keeping pace with them. The speed must be measured to be understood, rather than felt through the clicking of servos and circuit diagrams. The chase is over, and the war is won. The power of information conjured from thin electricity is now about as mystical as running water, a din covered over in the background, which we can turn up our headphones to avoid. The clicking of the hard drive was the sound of a heart beating, but now a good machine is a quiet machine. Synth no longer stands out, imbuing sound with a bursting fullness of modulated frequency, but forms the back beat, made ambient by the continuing presence of fashion and character, that ever-present reality of commodified music, technology's intrusion into this field no more than a passing fad of notice, easily outsourced to samples and re-instrumented hooks.

Computers do not stand in counterpoint to humanity any longer. This surfacing current has been smoothed over, pushed back into the desiring-flow of culture, resurfaced only reshaped and appropriated, as a bit of kitsch, or an example of the pace of history: a beginning point for the graph of Moore's Law. The alien quality of the digital was once its allure, but now it is a measure by which it can be placed into dead history.

Luckily, counterpoint itself seems to be a technique never fading in the human sense of aesthetics. The rebounding, echoing repetition of contrasting patterns is hardwired, we might say. The one to our zero, perhaps. Or maybe the zero to our one. It is no time at all to wait for its next instance, when the accelerating rhythm swings back the other way, bathing us once again in that uncanny green glow of the free-radical of time.

Until then, we still have David Borden's music, if you can find it.

6/16/2009

Purple is for People

Here's the deal about Iran:

It's tough to know how to feel about it. I mean it is tough because there are such conflicting feelings for me that I have trouble isolating them for the purpose of explaining them, or forming any sort of theoretical guidance on the basis of these feelings. Not that anyone is asking my opinion, but this is precisely it--there is no lack of feelings, but instead, a wide flow of them, making it difficult to say nothing, and perhaps even more difficult to say something.

It seems that most Western media has turned "green", often literally changing their website colors to the color of Mousavi's supporters. This is an easy position to take, all the more so for people from the part of the world already opposed to Ahmadinejad. Being Western, and being generally, or at least newly-neo Liberal, it is easy to look at the opposition to the non-democracy as a vote for democracy. A voice against a stolen election is a voice for fair elections. No?

But what are we looking at here? At best, a journey from worse to bad. Mousavi does not represent justice necessarily, nor democracy, nor a new or free Iran. He is part of the regime. He has already ruled in Iran as prime minister, during the Iran-Iraq War, over the course of which 500,000 people died. I'm of the opinion that any leader who controls a country in fighting a war is not a leader at all.

Of course, I side with the anti-statists, the anarchists, and the opposition to genocide by states against people. I differ in this opinion from a lot of people, some of whom back America in such choices, in addition to any number of other "democratic" states. I take the strange position that any entity occupied in killing people for the sake of controlling them is bad for humanity. Therefore, as long as this occurs, the people are not free.

So when I see video like this, I become understandably upset.

As the man said, when I see a cop beating a worker, I know what side I'm on. Same thing goes for militia men shooting at people on the ground.

If I had to join a political entity, I would join Médecins du Monde. It was started by Bernard Kouchner after he left MSF. "The associations provide care to the most vulnerable without regard for sex, age, religion, ethnic origin or political philosophy."

Not to support democracy, not to provide one vote per person. To always support whoever is the most vulnerable. No countries, no gods, no masters. Only humans.

The liberal mind, on the other hand, looks for a body to join. They want a side, or a cause. I've already heard, on NPR's All Things Considered this afternoon, comparisons between Mousavi and Obama. When asked for evidence that the election was fraudulent, the analyst said (I paraphrase) "It is like assuming that Obama would not carry the African-American vote." Wouldn't it be nice if every situation had a good guy and a bad guy, and all it took was to change the color of our Twitter avatar to the correct one?

I'm seeing a new sort of democracy, which I won't call a Twitter democracy. Let's instead call it a trending democracy. Less of the old left/right distinction, and more of the even older "go with the flow" distinction. Americans die, and we hang up a flag. Iranians die, and we hang up a green one. We boil down tragedy to a consensus of blame, and then make sure we show ourselves as against this blame. We, as good people, are against tragedy. For nothing, but against badness. It doesn't matter who it is, as long as s/he opposes this tragedy, and we will follow him/her right into the next one.

I don't know much about this Mousavi. But I do know that even if he won, Iran would still not be a democracy. If they can shut down the media, kill people in the streets, and act as they have, they are not a democracy. And even if Iran were America, the people would still not be free. Each motion forward is only as good as the number of people who are killed because of it. Greater than, less than, and equals to.

6/13/2009

Quick Bit O' Remix

-They are quite entertaining.

-They appear to have a considerable amount of time/skill/effort put into them.

-They are great examples of how a remix can be more than "jacking a beat", and producing something quite original and different than the source work.

Especially the second. I've done a bit of video editing work, albeit with a crappy program, and I was amazed at how long it took me. So, I tip my hat at all these artists, for their skill.

You have probably seen a few of these floating around (most have YouTube view counts in the hundreds of thousands) but in case you missed any, here is a little Welcome to the Interdome video sampler. Enjoy!

A song composed almost entirely of clips from Alice in Wonderland. There are more by the same artist on YouTube.

The trailer re-cut is somewhat of a genre on YouTube, stemming from the professional editing competition that brought us "Shining", some years back. The offerings are generally amateur now, ranging from the specious to the shakily awful. This one, however, is pretty good, because it totally reworks the original movie without going the obvious "make it a horror movie" route. It's minimal, but it works.

Because the movie trailer is itself a genre, there are many fan-made trailers floating around out there. I posted the various Tintin trailers a while ago, but here is one for The Green Lantern made almost entirely out of footage of the actor from other films. I guess this gets easier the more comic films are made.

I'm not a huge fan of the Brat Pack, but you have to admit this video is a pretty catchy homage to the somewhat silly dance sequences of these movies. It also makes me wish there were more musicals. Real musicals, not high school musicals. Call me when there is a cyborg reincarnation of Gene Kelly.

A mind-blowing, if somewhat carsickness-inducing, blend of AiW and Three Six Mafia. I guess Disney animation just really screams for modern appropriations (and they are certainly no copyright angels when it comes to remix and reinterpretation!)

6/11/2009

Resistance in the Flesh

Lebbeus Woods' blog is a rare one, and a favorite of mine. I don't always understand what he is talking about, but more often than not I get something to think about. I don't rush to his blog in my RSS reader, scanning through it quickly the way I do some of the mammoth feeds out there. I wait until I have some time to sit and read, more like a traditional magazine article.

The post "Architecture and Resistance" is one of the best things I've read in a while. He begins with a short thought or two on the concept of resistance in architecture, and then proceeds into a "resistance checklist", which is stellar.

Normally I very much dislike such reductions of so-called wisdom into tiny petit-four bites. This is a very popular way to gain "wisdom", whether it be page-a-day calendars, quotes, or self-identifying distillations of knowledge into warm, brothy nothingness (the ubiquitous Chicken Soup method), which seems to equate knowledge and wisdom with anecdotes so tepidly coddling as to lull the reader into a sense of warm, Christian/humanist self-satisfaction that couldn't be shattered with a steel pole.

This resistance checklist is none of that, instead bringing with it more of the black humor aesthetic of situationist graffiti. It is a checklist that is not meant to be checked off, as if accumulating any number of these items and claiming them as one's own would somehow create a positive status or identity. Some are unlikely, others impossible, and some are probably contradictory. Some are minor, and others would be so life changing in actuality that they seem to be at least somewhat tongue-in-cheek, if not actually ironic.

Of course, this comes off not as a light garnish of critical thinking, but as a fire hose. I suppose something could be said about the teaching of contrariness, obfuscation, and paradox as a key theme of resistance itself, and certain items on the checklist would carry this idea. But let's not get too situationist here--there may indeed be love underneath the cobblestones, but this doesn't necessarily make tearing up the Paradise Parking Lot the embodiment of resistive action.

I tend to think of these as something a bit more sublime than hurling newspaper boxes through Starbucks windows. More like small prayers, or syllables of mantras, that must be said ten thousand times to actually mean anything. And I don't mean to head east as an opposition to the street poetry of late capitalism--we could take as our model the paradigm of what we think we resist, instead of the nihilistic rejection of all that symbolizes the hate we hate. So, rather than any sutra, how about the Book of Revelation? How about that text, cited again and again as rationale for neglecting the environment, bombing the ____, or the inherent violence and war of the world? What knowledge lies here?

And yet it's not my intention to get into a theological discussion here (though often my roads lead there), but to say more simply--sometimes you have to read the part about the dragon about a hundred times before you really get it. Is it the devil? Is it the federal government? Who knows. More likely than not, the things we are totally convinced are completely symbolic are actually anything but.

Which gets me to a particular point on the checklist, which stood out to me like letters in flame.

There are plenty of great ones, which I like either because I wholeheartedly agree, or find them especially challenging. But this one:

"Resist any idea that contains the word interface."

spoke to me exceptionally loudly. Maybe it was simply because I was researching Flash-based eBooks that day, and compiling notes for a presentation to someone about them, and I had written the word "interface" about 76 times in five pages. Or maybe because I've never really liked the word at all. But it got me thinking. Firstly, the sentence does not say to resist ideas about interfaces; it says to resist ideas that contain the word "interface". This is an interesting distinction. Maybe we could say any idea that needs to explain "where the amazing interface be at" is clearly a bad idea, because an interface should be immediately apparent, if it is to be an interface. It's like labeling the go button with a sign saying "go button". Idiotic, and essentializing of function over form.

But then, we might note the obvious paradox--this checklist item does in fact contain the word "interface". Is this all a joke? As simple as "do not read this sentence"? Or are we to take some ludic bit of knowledge away from this; maybe we are just "missing the interface for the words", as the saying goes? Maybe we are studying things too deeply.

I think something different--or at least, this is the knowledge I got out of it. I think it is a warning to resist all interfaces. "Resist any idea that contains the word interface" is an admonition to resist ideas that seem to require interfacing. In other words, resist any sorts of logical wranglings that might lead one into definitional arguments about semantics, or stoic discussions of paradoxes starring heroes and animals.

The sort of philosophy that cares about hypothetical, universalizable cases is one that tries to interface with life by way of allegory, metaphor, and analogy. In any given Socratic dialogue we learn an awful lot about what "wise men" do versus "foolish men", or what gods are like, but very little about actual life. Yes, this is the birth of the canon of philosophy, but it's thousands of years old! I love allegory and analogy as much as the next writer, but please. You can only read Plato so many times.

These sorts of ideas--of logic, of comparison, and of Venn diagram dynamics--all require an interface to work them. They are compatible with circuitous diagrams, and universal, "all or nothing" statements because this sort of thinking, of the abstract and the general, is the interface by which we can access certain types of knowledge. This does not mean that it is false knowledge, or somehow faulty. But it is hardly all there is, even though it is often taken to be such, and therefore, we resist it.

The same thing is true for any interface. Sure, we can operate any number of gadgets via an interface, but as soon as it breaks down, we kick it in the chassis or slam it against the counter. Beyond all of the interfaces ever designed, there is the maximum interface: the systems flowing through the hands and the feet, the eyes, the mouth, the ears, the skin, the tongue, the penis, the vagina. Wired directly to the brain. So maximally interfaced, there is no way to get around it, no way to hack over and above, but only through--the Flesh Circuit--already bent forever, humming vibrations of feedback without a power source.

You may not be down for élan vital, but still, it exists and persists. Maybe you're not a mystic, but you can feel the drone of the universe. Even dead flesh still has a taste. Even lifeless substrate registers a feel. There is no incompatible format. There is only incomprehensible data. And it isn't just background noise, either. The unconscious is the substance of ones and zeroes, the basic data set, and the presence of circuit, broken or not. Resistance is feeling, the potential of blocked flow.

So you can keep the interface--the easy to understand, the attractive, well-designed, user-ready, plug-and-play, all-inclusive. The Chicken Soup for the Consomme-Soul. The Designer Revolution for Party People. Better yet is the inner, constant resistance--the idea that cannot be contained within an interface.

Selected Ambient Works Vol. II doesn't even have song titles. How's that for interface!

6/08/2009

In Which We Get Depressed By Looking at Graphs

Work is always shitty because it's not what I want to do. What I want to do I can't get paid for, and anorexic bohemians and dharma bums aside, that means I have a day job. My day job could be worse; in fact, it's probably the best job I've ever had. But this doesn't change the fact that it is not what I want to do.

And what I want to do does not include selling my hand-blown glass bongs, or visiting monasteries, or anything like that.

What I want to do has changed a bit over the years, but it has always involved producing.

At first I wanted to teach philosophy, and I went to school for this. I finished school for this. I didn't earn a PhD, so now I can't teach for this, but I did get a Master's Degree, which means absolutely shit.

Here's a graph of median incomes and employment rate against education level, from BLS. I cribbed it from Calculated Risk, my #1 source for graphs.

Well, that's depressing. I have the third highest grossing education level, but my average weekly pay is less than the median for high school graduates. Furthermore, I write two checks a month to banks, because I went and got educated. Goddamn, when I think what I could do with $1200 a week. I could buy a house. I could get a new car. I could work half the year. I could save money. For what? I don't know. Something really good. I could even afford to have a kid if I wanted to.

I stopped moving down the "teach philosophy" road because I had idiotically entered grad school under the assumption that, unlike in undergrad, grad students would actually be interested in the material for reasons bigger than the "I own this knowledge" possessive approach, or the "I want to succeed for succeeding's sake" professional approach. Maybe, like me, they would think the critical power of philosophy should be turned into a massive engine, swinging around the direction of education and the human species in general, and maybe even breaking a few chains while we were at it.

Actually, most of them weren't even very good at drinking, though this was something they claimed they really were good at.

Not that I am opposed to a little hard work. I've always been ready to take up a challenge, argue the underdog position, etc. And since I decided to quit academia and fight it out on my own, I think maybe I am a bit too interested in hard work. What I really dislike is willful lack of desire to make a difference. This is all over the academy. Education is a sinking, sinking ship, and nobody cares. Activism is for causes only--and higher education can't fathom causality any further than what it takes to gain acceptance.

So now I'm off, writing on my own. When I got here, I was wondering if I should even go for it. But now as I see all these other people out doing it on their own, I'm more motivated. I guess I'm not the only one, right? I'm not totally crazy to want to do what I want to do--to make high quality material, and maybe earn a living doing so.

I guess. Until it seems "earning a living" is itself crashing.

Perhaps it's still my fault, for some sort of optimist's idiocy, thinking that some sort of labor conditions might be possible under which those of us who are actually willing and able could band together, make something under our own control, and distribute it to interested and thankful folks. It's starting to look like the only people who can actually get this together are Food Not Bombs. Food Not Bombs may not be able to cook too well, but every time I've ever been told they were going to be somewhere, they were, with absolutely free, and often hot, food. Delivering on the promise, every time.

I was reading this book review in BookForum about Andrew Ross' new book about the failures of neoliberalism in the work world. This part caught me:

"Under neoliberal economic rule, Ross maintains, a perverse trickle-up dynamic has taken hold: Contingency and upheaval have spread upward from low-skill, low-wage labor sectors in the global economy. The condition he describes as “precarity” now cuts widely across class, occupational, and geographic divides. What differs is how it is experienced: as freedom and autonomy in a subcontracting biotechnology lab or as “flexploitation” in a sneaker factory. In this new set of labor arrangements, Ross argues, the artists, designers, writers, and performers of the “creative class” occupy a “key evolutionary niche on the business landscape.” Casual labor is now commonplace in glamorous or highly paid creative fields, from filmmaking to software design. Such well-rewarded occupational niches have doubled as virtual infomercials for the cool, humane, and service-driven appeal of neoliberal economies as industrial capital has continued to take flight. “Cultural work was nominated as the new face of neoliberal entrepreneurship,” Ross notes, “and its practitioners were cited as the hit-making models for the intellectual property jackpot economy.”

Oddly enough, Ross points out, “the demand for flexibility originated not on the managerial side but from the laboring ranks themselves,” most notably as part of the “revolt against work” that plagued managers in the early ’70s. To alleviate workforce anomie, managers drew employees into the decision-making process, made work schedules more flexible, and sought to liven up alienating workplaces with a range of feel-good activities. But these came at a price, Ross notes, as managers introduced greater job risk while casualizing the basic terms of work.

As industrial employment continued to hightail it to points south of the developed Western world, this “two-handed tendency” remained in place, Ross writes, reaching its “apotheosis in the New Economy profile of the free agent, when the youthful . . . were urged to break out of the cage of organizational work and go it alone as self-fashioning operatives, outside the HR umbrella of benefits, pensions and steady merit increases.” Risk aversion had been the old economy’s fatal flaw. Risk appetite was the new economy’s badge of virtue."

And you know, it's true. Reading about the graphic arts industry, or the writing industry, or any other "creative" industry, you get all these people talking (and no doubt, blogging) about how it's hard to "go it alone" as a freelancer, but in the end, "totally worth it." Of course, they can do it, no problem. Because they were so creative in the first place.

This is the thing--we think we've let the air out of the one-upmanship, the individualist corporate hierarchy, the big mountain of caterpillars climbing other caterpillars. But really, it's been so solidified that nobody even realizes it. Why are you a "creative"? Because YOU are so creative. Why are you making it? Because YOU are making it. Because YOU know the secret to viral literature, design, or whatever your creative skill set happens to be. Everybody is just so damn creative, all the time. And they are fighting each other for the freelance money thrown out like corn to chickens.

I often find myself refusing to take part. I'm not one to stand in lines. I wait until everyone else has gone in, and then I go. If there is limited admission, then I get there early enough to beat the line. If I can't get there early enough, then fuck it.

Why is this the way it is? Are there really already so many people on earth that talented people can't make a living at their talent? Or is our system of making a living totally fucked? Whatever happened to the unions? Say what you want about technology, the definition of a living wage, strikebreaking, or the five-day work week, but whatever happened to the spirit of collectivity? They used to break unions on race and nationality, because it took deep-seated self-hatred to break the collectivity. Now they just tell you you're an individual.

You're so talented, you are better than all the rest.

Your work is so much better, you have nothing in common with them.

You're creative, you're different than the workers who are guaranteed payment for time and effort.

You're unique, and don't need a union.

You have potential, and you could get rich, so don't worry about health care or retirement.

People always think unions are for the lowest, the greatest common denominator. The people who break rocks with their hands are the ones who need unions. The anybody and everybody who can't get anything better, who need to be protected just like the old need Medicare.

Well, they do need unions. But unions are for talented people too. Skilled laborers, they used to call them. People who work, but work creatively.

I've been watching the second season of The Wire, and it has got me wanting to be a longshoreman. Those guys, they are in a dying industry, feeling the push of technology on one shoulder, and condo developers on the other. But despite that, they never think about deserting the collectivity of the union.

It's not even about the union itself, or the bargaining, or the organization (though benefits would be nice). It's about the work itself. It's about working on something, putting real effort into it, and knowing it's not all for nothing. It's about knowing you have a place to work every morning, and a supply chain into which to put your product. It's about knowing quality is appreciated, and not just tolerated. Maybe you can get these things on your own. But most likely, you won't.

I wish there was a local writers' union. I wish I could apply for membership, show them what I've got, and then they could say, "You're in. Here's your card, and we'll call when we've got work." Or they might say "Beat it, pal. And don't let us catch you scabbing, neither." That's fine. Then I'd amble on down to the next union I've got some skills for, and see if I can earn a living at that. Instead, I feel like one of the horde of potential miners standing outside the gate, wondering if chance will let my family eat or not. Everyone's so supportive of everyone trying to be creative, but they're only going to give you a little bit of generalized "support", not anything that will really help. Because after all, this is a competition. And maybe, when it comes down to it, they can't really give it anyway.

I put my work on the Internet, and at least a few people look at it, so thank goodness for that. Kind of like digging a bit of coal out of my own backyard. Otherwise I might be holding people at knife point in the park and forcing them to read my essays, which is probably not the best way to win readership.

That's for free, of course. I don't have a problem with that. Many people don't. Maybe everything will be free, eventually, and business will become a niche at best. I can dig it. But I hope real estate, food, and medicine hurry up and catch up with the creative industries. I'm hungry.

The union better call soon, or I'm never going to finish this goddamn novel.

6/07/2009

Twitter, Semiotics and Programmatics, and Running out of Characters

The goal, it seems, is to develop conventions that increase the use of symbolic language within common language, and so stretch how the firm 140-character limit can be used. Here are some pulls from some of the various interested parties out there:

"[O]ur goal is not to turn Twitter into a mere transport layer for machine-readable data, but instead to allow semi-structured data to be mixed fluidly with normal message content." (http://twitterdata.org/)

"Nanoformats try to extend twitter capabilities to give more utility to the tool. Nanoformats try to give more semantic information to the twitter post for better filtering." (http://microformats.org/wiki/microblogging-nanoformats)

"These conventions are intended to be both human- and machine-readable, and our goal here is to: 1. identify conventions in the wild, as users or applications begin to apply it. 2. document the semantics of the microsyntax we find or that community members propose, and 3. work toward consensus when alternative and incompatible conventions have been introduced or proposed." (http://microsyntax.pbworks.com/)

Very interesting! Besides the cool buzzwords like "nanoformat" and "microsyntax", which are just itching to be propelled into circulation by the NYT's tech section (after which I will hear them again from all the publishing blogs), I am captivated by the goal of semanticization of content for people and machines--equally and fluidly. This is some cyborg shit, here.

The explosion of content in Twitter has created a need for programs and applications to help parse the data, to keep it usable. One can only follow so many people, and with the increase of users and the increase of posts we quickly reach a saturation point. As the Twitterverse of apps taking advantage of the simple Twitter API grows, this saturation compounds upon itself, and Twitter is becoming less of a site, more of a service, and even, little by little, a format.

The Internet has given substance to all sorts of linguistic structures, from the densely complex (at least to the non-adept) programming languages of Flash, JavaScript, etc., to the slightly more accessible "read-only" HTML, to the linguistically simple email, and even to the real-life-human-interface replicators of video/voice chat. However, each of these seems to find its place on one side of a categorical boundary, which I will call the signification language/programmatic language boundary. I'm about to launch into several of these categorical boundaries, which are somewhat dense distinctions of theoretical concepts that often overlap as much as they differ. Still, because semiotics, or the study of “meaning”, is about these very distinctions, I use them as diagrams or illustrations to try to get closer to a certain sense of meaning which I believe is relevant to the conversation.

Signification language is, simply, all common language and syntax as we know it, being that we are thinking, speaking, understanding humans. This is language built from signifiers, intending to reach the signified, or some ideal variation thereof. It is language which, as we know it, attempts to "mean" something.

Programmatic language, on the other hand, is still built from signifiers, but they are not intended to relate to the signified directly. Another way to put it is that programmatic language does not have pure content. Programmatic language is built from signifiers which are meant to interact, and thereby perform a linguistic function on content, but this content is separate, like a variable, and therefore kept categorically apart from the rest of the signifiers with programmatic meaning. What I'm saying, in a roundabout way, is that this is a programming language. You cannot speak Flash. You can know Flash, and by compiling and understanding it via a "runtime", interact with content in various ways. The content is what is being spoken and understood, but it is spoken and understood through Flash.

(This would be as good a time as any to remove any remaining doubt, and admit that I have only a basic understanding of simple programming. However, I believe I understand the concept enough to talk about it, at least from a semiotic standpoint.)

A good example of the programmatic is Pig Latin. It takes a language that does mean something, and converts it programmatically into a new form, which can easily be understood by anyone who can parse the program. Another example is the literary tool known as metaphor--anyone who can parse metaphor knows that it is not meant literally, and therefore he or she is able to easily search the surrounding content for the analogical terms of the program: A is to B as X is to Y. Logic is another sort of program; gold is yellow/all things yellow are not gold—this has meaning because of a way of understanding how it means, not only what it means. And so on. In fact, it might be said that the rules of grammar and syntax for our signification languages are themselves a programmatic component of signification, and this would not be totally incorrect. (And here is the overlap of the categories.) We are not reliant on grammar and syntax to signify, but for those attuned to the programmatic language, it transforms the content and allows it to have a new dimension of meaning: a new how it means. This new dimension, though not always being dogmatically utilitarian, is always related to use. Language is the use of language, whether in the act of signification, programmatic interaction, or wild, totally incomprehensible expression.

There is another concept I'd like to throw into the mix. This is the duality between free-play and universalization. It is, like the signification/programmatic duality, not exactly mutually exclusive. Free-play is mostly related to signification, because it occurs in the act of signification, along with intent. We gain new signifiers and meanings by a poetic play of the signifiers. Universalization works in the opposite direction. By forming a hard and reproducible definition of a concept, word, or action, we can ensure that meaning will not mutate, and anyone who avails themselves of this definitional quality can be reasonably sure the meaning can be established between various people, unified by the universalization of the concept. A certain amount of both of these occurs in all language, but signification can be almost entirely free-play (e.g. “You non-accudinous carpet tacks!”) and programmatics can be nearly pure universalization (e.g. “def:accudinous=0”). However, signification must also contain a great deal of universalization in order to mean anything more complex than simple emotional outburst. And programmatics contains free-play as well (everyone knows programming is quite creative, despite the stereotype). It is the difference between these two ideas that gives them their power--not their exclusivity. To take the Pig Latin example again: one could easily write a program to translate a poem into Pig Latin. It's strictly universal, and accurate. But could one write a program to translate poetry, and maintain its poetic play? Much more difficult. But try employing a poet to translate things into Pig Latin. It might work, but you'd be better off with a program that can streamline the universalities.
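The claim that a Pig Latin translator is easy to write, and strictly universal, can be made concrete. Below is a minimal Python sketch, assuming one common dialect of Pig Latin (a leading consonant cluster moves to the end and takes "ay"; vowel-initial words take "way"). The rule set and the function names `word_to_pig_latin` and `to_pig_latin` are hypothetical choices for illustration; Pig Latin has several competing dialects.

```python
import re

VOWELS = "aeiou"  # assumption: "y" is treated as a consonant


def word_to_pig_latin(word: str) -> str:
    """Translate one alphabetic word using one common Pig Latin rule set."""
    lower = word.lower()
    if lower[0] in VOWELS:
        # Vowel-initial words just take "way" on the end.
        result = lower + "way"
    else:
        # Move the leading consonant cluster to the end and add "ay".
        # A word with no vowels at all is treated as one long cluster.
        i = next((k for k, ch in enumerate(lower) if ch in VOWELS), len(lower))
        result = lower[i:] + lower[:i] + "ay"
    # Keep an initial capital where the source word had one.
    return result.capitalize() if word[0].isupper() else result


def to_pig_latin(text: str) -> str:
    """Translate every run of letters, leaving spaces and punctuation untouched."""
    return re.sub(r"[A-Za-z]+", lambda m: word_to_pig_latin(m.group(0)), text)


print(to_pig_latin("Resist any idea that contains the word interface."))
# Esistray anyway ideaway atthay ontainscay ethay ordway interfaceway.
```

The rules never need to know what the sentence means, which is exactly the universality the paragraph credits the program with; any poetry in the input is simply carried through, unexamined.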

So, the goal of microsyntax (I'm just going to choose one term and stick with it) is to create a certain amount of universalization of programmatics, such that the content of Tweets can function programmatically, to improve the quality of the content within the form. However, there is also strict attention to keeping the programmatics within an overall format of free-play signification. This seeks to maintain wide use, ease of human understanding as well as computer parsing, and the free-play aspects that have made Twitter so popular.

The reason I have bored you with all of these mutated semiotic terms is so I can explain just how interesting this goal is. I can think of very few attempts to institute such a composite of signification and programmatic language in our linguistic world. There are plenty of overlaps between these concepts in the daily use of language, but no defined interaction between them as a goal. There are some abstract examples where the goal is implied. World of Warcraft, or any other MMORPG, for example, is a combination of a signifying social network with the programmatic skill set of playing an RPG. Of course, the programmatic aspects of the game, once mastered, take a formulaic back seat to the social, conversational aspect of guilds and clans. You can even outsource your gold mining to Asia, these days.

So Twitter is at least somewhat unique in that developers of microsyntax are taking into consideration the fact that the programmatic will be bonded and joined, fluidly, with the signification language of the medium. These are programmatic techniques developed for the user. Basically, we are asking IM users to learn rudimentary DB programming--and expecting them to do so because it is fun and useful. If you don't see this as a fairly new and quite interesting development, then you are probably reading the wrong essay.

So what is unique about Twitter that is causing this interesting semiotic effect? What is it about this basically conversationally-derived medium that is causing us to inject it with programmatics?

This is what Twitter does--it takes text messaging, a signification language, and adds some programmatic features. First: a timeline, always (or nearly so) available via API. Second: conjunction, i.e. the “following” function; one can conjoin various accessible timelines into one feed. Third: search; one can search these timelines, within or across following conjunctions.
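The three features can be modeled, very roughly, as operations on lists of timestamped posts: a timeline is a sorted list, following is a merge of several such lists, and search is a filter over the result. This is a toy in-memory Python sketch; the `Tweet` type, the `home_feed` and `search` helpers, and the sample data are hypothetical and say nothing about how Twitter's service or API actually stores or serves timelines.

```python
from __future__ import annotations

import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class Tweet:
    author: str
    timestamp: int  # larger means newer (hypothetical time units)
    text: str


def home_feed(timelines: dict[str, list[Tweet]], following: set[str]) -> list[Tweet]:
    """Conjoin followed users' timelines into one feed, newest first.

    Assumes each individual timeline is already sorted newest first,
    which is what heapq.merge(..., reverse=True) requires.
    """
    chosen = [timelines[user] for user in following if user in timelines]
    return list(heapq.merge(*chosen, key=lambda t: t.timestamp, reverse=True))


def search(tweets: list[Tweet], term: str) -> list[Tweet]:
    """Case-insensitive substring search over any list of tweets."""
    term = term.lower()
    return [t for t in tweets if term in t.text.lower()]


timelines = {
    "alice": [Tweet("alice", 30, "reading about #microsyntax"), Tweet("alice", 10, "coffee")],
    "bob": [Tweet("bob", 20, "RT @alice coffee")],
    "carol": [Tweet("carol", 25, "nobody here follows me")],
}
feed = home_feed(timelines, {"alice", "bob"})
print([t.timestamp for t in feed])                 # [30, 20, 10]
print([t.author for t in search(feed, "coffee")])  # ['bob', 'alice']
```

Search here runs across a following conjunction (the merged feed), but the same `search` call works on a single timeline, matching the "within or across" distinction in the paragraph.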

But these features are not within the signification matrix. The timestamp may be metadata, but the timelines, follow lists, and search are only available via the service framework and its API. Without the service's presence on the Internet, few people would be using Twitter, because even if you can follow and unfollow via SMS, how are you going to decide who to follow without search features and the ability to abstract the conjunctions by peering at other people's timelines? You might as well be texting your number to ads you read on billboards, trying to find an interesting source of information. With the web app, you can actually use the service as a service, and utilize its programmatics to customize your access to the content.

So how do the programmatic features begin to enter the content? I think it is because of the magic number 140. Because of this limit, the content is already under some programmatic restraint on its ability to signify. As in an IM or an SMS, abbreviations and acronyms are used to conserve space while still transmitting meaning. But this is a closed system; this bit of programmatics continues to refer to the content. The interesting thing about Twitter is that the formal elements of the program within the text can reach out of the content, to the program of the service itself, and then back into the content. In this way, it is completely crossing the barrier between form and content--not just questioning the barrier or breaking it, but crossing it at will. Because the content is restricted to a small quantity, around which the service's program forms messages, we are left with a thousand tight little packages, which we must carefully author. They are easy to make, send, and receive, but we have to be a bit clever to work within and around the 140.
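The "closed system" of abbreviation under the 140 limit can be sketched as a function that swaps in shorthand until a message fits. This is a hedged Python illustration only: the abbreviation table, the left-to-right strategy, and the name `fit_to_limit` are hypothetical, and it counts Python string characters rather than whatever Twitter itself counts.

```python
from typing import Optional

LIMIT = 140

# Hypothetical abbreviation table; real users improvise their own.
ABBREVIATIONS = {
    "because": "b/c",
    "with": "w/",
    "tomorrow": "tmrw",
    "you": "u",
    "are": "r",
}


def fit_to_limit(message: str, limit: int = LIMIT) -> Optional[str]:
    """Abbreviate word by word, left to right, until the message fits.

    Returns None if even the fully abbreviated message is too long.
    """
    words = message.split(" ")
    for i, word in enumerate(words):
        if len(" ".join(words)) <= limit:
            break  # already fits; leave the remaining words alone
        words[i] = ABBREVIATIONS.get(word.lower(), word)
    result = " ".join(words)
    return result if len(result) <= limit else None


print(fit_to_limit("see you tomorrow"))            # see you tomorrow
print(fit_to_limit("see you tomorrow", limit=12))  # see u tmrw
print(fit_to_limit("see you tomorrow", limit=5))   # None
```

Every substitution still points back into the text itself: "tmrw" only means "tomorrow" to a reader, which is the closed kind of programmatics the paragraph contrasts with symbols that reach out to the service.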

This is a third semiotic category differentiation: the “interior” of content and the “exterior” of its programmatic network. As far as users are concerned, most Internet services are entirely interior. You create a homepage, or a profile, and via the links and connections this central node generates, you spread and travel throughout the network. You can view other profiles, but only via the context of having a similar profile. These services are entirely interior, because the user moves from the center outward into space, and there is no border between the service's content and the programmatic functions that lead between elements of content. The hyperlink is an extension of the interior, not a link to any exterior. The developer of Facebook or some other service may be able to magically “see” the exterior and manipulate it, as if s/he is viewing the “Matrix”, but the user can only see the content.

Twitter is different, because the service, for all intents and purposes, is not much more complicated than the programmatics the user already must utilize. The programmatic exterior is visible, because it is such an important element of what makes the interior content function for the user. The simplicity of the 140 limit makes the junction between interior and exterior very apparent; because there is so little space for content, the programmatics are relatively simple, and necessarily very available. And because of this, users are willing to creatively explore new programmatics, venturing into this “exterior” with their “interior” content and continuing to bridge the gap, because the functionality is already bridged so often in their understanding of the Twitter language.

Here are some programmatic symbols that have proved themselves useful. @ was the first, I believe (shouldn't somebody be writing a history of this?), allowing conjunctions to grow across timelines. Then # (the development of which is traced by http://microsyntax.pbworks.com) similarly links posts into new timelines, not by user, but by subject. RT is a way of expanding and echoing content throughout new timelines, either across user-based timelines or subject-based ones. And then the ability of the Twitter service to recognize URLs allows the content to connect back to the rest of the Internet (and accordingly, to URL shorteners, picture or other media storage, and anything else the web can hold).
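The four conventions in this paragraph can be picked out of a tweet with a few regular expressions, which is one way to see how they act as programmatics embedded in ordinary text. The patterns below are simplified assumptions, not Twitter's actual parsing rules, and `parse_microsyntax` is a hypothetical name; the negative lookbehind `(?<!\w)` is there so that an email address like `me@example.com` is not read as a mention.

```python
import re

# Simplified, hypothetical patterns; a real parser handles many more edge cases.
MENTION = re.compile(r"(?<!\w)@(\w+)")
HASHTAG = re.compile(r"(?<!\w)#(\w+)")
RETWEET = re.compile(r"\bRT\s+@(\w+)")
URL = re.compile(r"https?://\S+")


def parse_microsyntax(text: str) -> dict:
    """Pull @mentions, #hashtags, an RT source, and URLs out of one tweet."""
    rt = RETWEET.search(text)
    return {
        "mentions": MENTION.findall(text),  # conjunctions across user timelines
        "hashtags": HASHTAG.findall(text),  # timelines by subject
        "retweet_of": rt.group(1) if rt else None,  # echoed content
        "urls": URL.findall(text),  # links back out to the rest of the web
    }


print(parse_microsyntax(
    "RT @alice: notes on #microsyntax http://microsyntax.pbworks.com/ via @bob"
))
```

Note that the URL pattern is deliberately naive: it stops only at whitespace, so trailing punctuation would be swept into the link.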

All of these have been user-developed, then picked up and utilized by the widespread user base, thus proving their own efficacy. Eventually, the Twitter service added its own features, recognizing these symbols as its own unique Twitter HTML tags, and giving them native function in the form of links to the basic Twitter service, without requiring an app to do so. These symbols change and enhance the content of the Tweets, and allow the user to relate to and access content outside the Tweet itself, as well as interact between various Tweets in a universally understood way.
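Recognizing the symbols and turning them into links to the service amounts to a substitution over the same kinds of patterns. In this Python sketch the link targets (`https://twitter.com/<user>` for mentions and a `https://twitter.com/search?q=%23<tag>` URL for hashtags) are assumptions for illustration, not a record of what Twitter actually emitted, and `linkify` is a hypothetical name. The plain text between symbols is HTML-escaped so that a stray `<` cannot break the markup.

```python
import html
import re

# One pattern for all three link-bearing symbols. URLs are tried first, so an
# "@" or "#" inside a URL is consumed as part of the URL, not as a symbol.
TOKEN = re.compile(r"(https?://\S+)|(?<!\w)@(\w+)|(?<!\w)#(\w+)")


def linkify(text: str) -> str:
    """Return tweet text as HTML, with URLs, @users and #tags turned into links."""
    out, pos = [], 0
    for m in TOKEN.finditer(text):
        out.append(html.escape(text[pos:m.start()]))  # plain text between symbols
        url, user, tag = m.groups()
        if url:
            safe = html.escape(url)
            out.append(f'<a href="{safe}">{safe}</a>')
        elif user:
            out.append(f'<a href="https://twitter.com/{user}">@{user}</a>')
        else:
            out.append(f'<a href="https://twitter.com/search?q=%23{tag}">#{tag}</a>')
        pos = m.end()
    out.append(html.escape(text[pos:]))  # whatever follows the last symbol
    return "".join(out)


print(linkify("RT @alice: notes on #microsyntax"))
```

The symbol stays visible in the text while the link reaches out to the service, which is the interior/exterior crossing the essay describes.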

I know there are other symbols people use out there, but they are not as widespread as the ones I have just mentioned. This is an interesting facet of the programmatic Twitter symbol. Any symbol can intend any meaning, either through straight signification or programmatic use. But, to really enhance the Twitter medium, it must catch on. This allows it to function in the medium according to the programmatics of the form itself: the timeline, conjunctive networks, and search. If two friends have a secret code, that might provide a certain use between two people. But once that code becomes a language general enough for meaning to be intended to the broad base of users, and similarly, appropriated and used by them, then it is not a cipher, but part of a language itself. Its use will play until it develops enough of a universal character to be available to just about anyone.

We have seen the Twitter service look out for these things and exploit/develop them, as any good Web 2.0 company should. One might call them “official”, or as much as anything about such a free service is official. Certainly, when Twitter recently changed the service such that @ responses would not appear in the timelines of those not following the respondee, this was about as official a service change as one could imagine.

This introduces another question, similar to this issue of “official” symbol universalization. We might call these questions “social” questions: to the extent that language only occurs between individuals gathered in a mass, combining free play with universal programmatic meaning attached to content, the dictates of individuals do affect the language's use. Naturally, one cannot make a language illegal, or regulate its usage, but attempts to do so will have an effect of some sort, even if not the intended effect. Social control of a language may not firmly control its meaning (content) or use (form), but it will certainly change both.

So the second social question (or questions) I would like to raise is this: beyond our reliance upon the Twitter service to adopt and officially universalize symbols' programmatic use, to what extent are we willing to base the design of new symbols, and their open-sourced, community-driven conventions, upon a single service run by a closed, controlled entity that just so happens to be a private company? I am not so interested in the intellectual property aspects (for the moment), but to develop a microsyntax for such a service is to develop a language that will be, in the end, limited and proprietary. What other services, forms, and media might the development of a microsyntax affect? To what extent should microsyntax be limited to Twitter? To what extent will the usefulness of a microsyntax be affected by attempts to universalize the language, or to localize it to a particular service? The size and popularity of Twitter seem to make these moot points, to some extent. Clearly a unique syntax is already developing, whether or not it is the best idea. But is “learning to speak Twitter” simply the best idea? Or should the semiotic lessons we learn from exploring microsyntax be better applied to a wider range of media than simply a “glorified text message”?

I do not know the answers to any of these questions yet, nor do I really even have any idea of what sort of symbols should be included. Being the amateur semiotician I am, I have a different position to push.

The notion I would like to add to the discussion is a bit abstract, but I believe it is important. I would like to introject the concept of Authorship, for what good it may do (if any).

Authorship used to be the main source of innovative programmatics in language. Naturally, it was a main source of significatory content as well, but even more important than the stories themselves was the way they were told. From the time of Homer, the author has held a significant position in language as the programmer, the prime mover, and the service provider. It was with a certain authority, a certain speaking of the subjective “I” transformed into universalized narrative, that an author was able to shape the use of language. Before the days of authors, perhaps group-memorized verbal legends were the original crowd-source.

And we're heading back there again. I'm not going to dignify Twitter-novels with discussion, but I think it is fair to say that unencumbered access to literature via digital technology is becoming more important to its consumption than the identity of the author. I don't think you can crowd-source the writing of a book per se, but you sure can't get anyone to read a book without a little bit of user-generated marketing.

But even though authors may be a little disappointed at no longer being well-paid (or paid at all) celebrities, they still haven't lost their power over language. They have a puissance, in the “pushing” or “forcing” sense of the word, as well as in the sense of potential. Perhaps they have lost their way a bit, and forgotten the power one can wield with a bit of forceful word-smushing (certainly folks have died for it in the past), but the capacity is still there. Authorship is a firm hand around the pen, or fingers on the keys.

I don't think this bit of figurative nostalgia is unrelated. One doesn't set up a new syntax by writing a white paper or a blog essay; one does it by going out there and using the syntax. Proposing something is never enough; one has to use it with force, and let the force of the symbols become self-evident. If it is powerful, then it shall be. Language has developed, since the age of authors and perhaps even before, via loud shouts, firmly intended phrases, and eloquent incantations alike. We know there is hate speech, and are wary of its power. What about language with the power to build, or unite? Or simply to communicate with lightning speed: a linguistic Internet in symbols and syntax alone. I was fascinated by Dune as a kid; the idea that the Atreides had their own battle language, a secret language only used in matters of life and death, bowled me over. No, Twitter is not a battle language. But it is some sort of new language. Perhaps, an Internet Language.

But this is the problem with the Twitter service: it is ultimately reductive, in signification and programmatics. It's that damn 140-character limit, both the source of its semiotic innovation and all of its troubles. By being one of the first popular services to define both an inside and an outside to its content (all the others, which think of themselves as ever-growing blobs, come to look like them too), it chose too small a box. We need more from our Internet content than 140 characters can ever provide. Therefore the wild expansion is occurring on the outside, and the Twitterverse is becoming a horribly mutated and desolate place.

The truth is, interactions with ulterior apps via programmatics and the API are near worthless. Sure, you can develop some good client apps for writing posts, keeping track of multiple timelines, and searching. But micropayments? GTD lists? Real threaded messages and chats? Media sharing? These are all hopeless wishful thinking. Just because the service is popular does not mean you can convince everybody, or even a critical mass, to accomplish all their Internet uses through a 140-character window. All of these things exist in “large form” in their own separate interiors, and to try to shrink them into the syntax of Twitter is to squish them too much and fill that little 140-character box to the breaking point. This is not to say we have uncovered all that Twitter has to offer, but it is to say that most of the invention is horribly un-programmatically authored.

Twitter's power lies in its communication—in its content, rather than shoving content programmatically through an overloaded API. It delivers small, concise messages, and allows a certain amount of programmatic networking to access this content, in a brilliantly small and simple package. As authors, this is the avenue to develop for Twitter. To push Twitter and see what we can do with its programmatic content, not IPO-in-the-sky payday concepts.

But microsyntax need not begin and end with Twitter. What if we took the approach of the equally accessible interior/exterior, the content/programmatics approach we have found in Twitter, and applied it to other services, or created new services around this semiotic utility? What if, rather than forcing all the exterior into a too-small 140-character interior, we developed an interior simple enough (say, plain text), and developed microsyntax to control the programmatic aspects of access to this plain text in ways simple enough for any user to wield? What if a service was created, not unlike email, that would route plain text on the basis of its plain text? What microsyntax could be added to email systems, for example? What about openly readable, tagged, searchable email? Why not? Why are wikis constrained to web servers, like shadow-plays of web activity? Why aren't they linked via opt-in, streaming timeline conjunctions? Rather than storing an edit history, a wiki could be the timeline itself, constantly in atemporal motion rather than accumulating on a server. Anybody opting in would be simultaneously reading and forming the wiki with their programmatically intended text updates.
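To make the plain-text routing idea a little more concrete, here is a toy Python sketch under heavy assumptions: messages are delivered purely on the basis of the @names and #tags in their own text, to whoever has opted in to those tokens. `PlainTextRouter`, `subscribe`, and `send` are hypothetical names, and everything a real service would need (storage, identity, networking) is left out. A subscriber to `#wiki`, for instance, would see the wiki simply as the running timeline of messages tagged with it.

```python
import re
from collections import defaultdict

# Hypothetical microsyntax: any @name or #tag in the text is a routing token.
TOKEN_RE = re.compile(r"(?<!\w)([@#]\w+)")


class PlainTextRouter:
    """Toy in-memory router; storage, identity, and networking are deliberately omitted."""

    def __init__(self):
        # Maps a lowercased token such as "#wiki" or "@ben" to its opted-in subscribers.
        self.subscriptions = defaultdict(set)
        # Each subscriber's inbox is simply a timeline (list) of delivered messages.
        self.inboxes = defaultdict(list)

    def subscribe(self, subscriber, token):
        """Opt a subscriber in to every message whose text contains `token`."""
        self.subscriptions[token.lower()].add(subscriber)

    def send(self, text):
        """Deliver `text` to everyone subscribed to any token it contains; return the recipients."""
        recipients = set()
        for token in TOKEN_RE.findall(text):
            recipients |= self.subscriptions.get(token.lower(), set())
        for name in sorted(recipients):
            self.inboxes[name].append(text)
        return recipients


router = PlainTextRouter()
router.subscribe("ann", "#wiki")
router.subscribe("ben", "@ben")
router.send("Edit to #Wiki page on box symbols, ping @ben")
print(router.inboxes["ann"])
```

No routing table is written by hand here: the addressing lives entirely in the text the user types, which is the point of the proposal.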

Twitter has also opened up the door. It has linked the programmatic with content in a way that appeals to millions of people, and it could be argued to have provided real use to these same millions. Now that we, as authors wielding such methods, can see what it is doing to the usefulness of language, we have a new angle from which to push language. What other sorts of programmatic changes can we make to our content, both on Twitter, and in the rest of our linguistic world? Could we develop a microsyntax for everyday speech? Certain microsyntax elements leak into speech already. What about long-form Internet writing? What symbols would improve its function, and what HTML tags would provide better access both inside and outside the text? What should be universalized, and what should get more free play? Should we develop a taxonomy of tags? A symbol to denote obscure metaphor? The possibilities, and the potential, are near endless.

6/04/2009

Let My Boxcutter Be My Guide

There are also some esoteric symbols printed onto various cartons, for the purpose of telling warehouse personnel how to treat the boxes, given the contents. Some of these are obvious, others are not. Some of my favorites are the stacking diagrams--drawings of how to palletize the boxes for maximum strength and product capacity, all while keeping the product safe.

For those of you interested in "architectural fiction", and such things, we might think of these as visual-commodity-infrastructure-planning-geometries. Or, for those of you interested in "architectural fantasy", these are packgnosis-mobiscriptural-palletamulet-mandalas.

Materials handling to Third Bardo, man!

I believe this is the old packaging of digital printer developer. Most of these are from digital printing supplies of some sort. Even from looking at the box, I have no idea what this is supposed to symbolize, other than not to stack more than ten layers deep.

Toner, I think. The far left means stack no more than 25 high. The far right means the toner's holy halo will be visible when glorified with rays of the one true god.

Boxes of bottles of fuser oil. Also, the blueprints for "the endless staircase of holy knowledge."

This is also from the fuser oil box. To me, it evokes twisted intestines, and the dark voids that lie within us all.

More from the toner cartridge box. The cartridges come two to a box, and somehow, in the way they fit, they easily slide out of the box; but when they are empty and I am ready to take them out to the trash, I can never fit two back in the box. Maybe I don't pay enough attention in the first place. Or maybe, just maybe, this diagram depicts the boxes shrinking once the cartridges are removed.

This is actually from a box of instant Thai soups, which is a printing supply to me, in an abstract way. Note, first of all, the mystic hand symbol on the left, which I'm sure I saw in one of the seizure-inducing gnosis scenes in Lawnmower Man. You might also notice how they managed to fit "protect from rain and sun" into one symbol, which is more efficient than another example above. Then, although I can easily figure out that the far right symbol means, "this soup is not for peasants who still use archaic and symbolic tools," I am at a complete loss as to what the bottle means. I thought the whole point of these things was to symbolize a message understandable in any language! Does it mean, "do not consume with pure whiskey?" Or, "Made with 100% Not Holy Water?" Maybe, "Only serve in Erlenmeyer Flasks?"

Anybody who speaks Thai is more than invited to elucidate in the comments.

Is that a Proxy Server, Kittycat?

This will probably slow down my rate of posting--not because I blog a lot at work, but because my long periods of computer usage, often while large documents are batch-processing or spooling to print drivers, give me time to scan things, find pictures, or jot down notes, without affecting my productivity. This is obvious to many, but a complete mystery to certain nosey consultants, who may not even know what "batch processing" is, let alone anything else I actually do.

Anyway, not that it's actually a problem, or that it is really related, but using the word "nosey" reminded me of this scene from Chinatown.

6/01/2009

Letter G, I Thought I Told You to Shut the Hell Up

Pretty cool. The photos look great too; check 'em all out at the link above.

But did you think of the same thing I thought?

Yes, you did:

Looks just like the un-brainwashed view in John Carpenter's landmark film, They Live. From the cheerily bright, yet polarized black-and-white urbanities, to the all-powerful, eye-capturing images translated in broadcast of lock-step, sans-serif font.